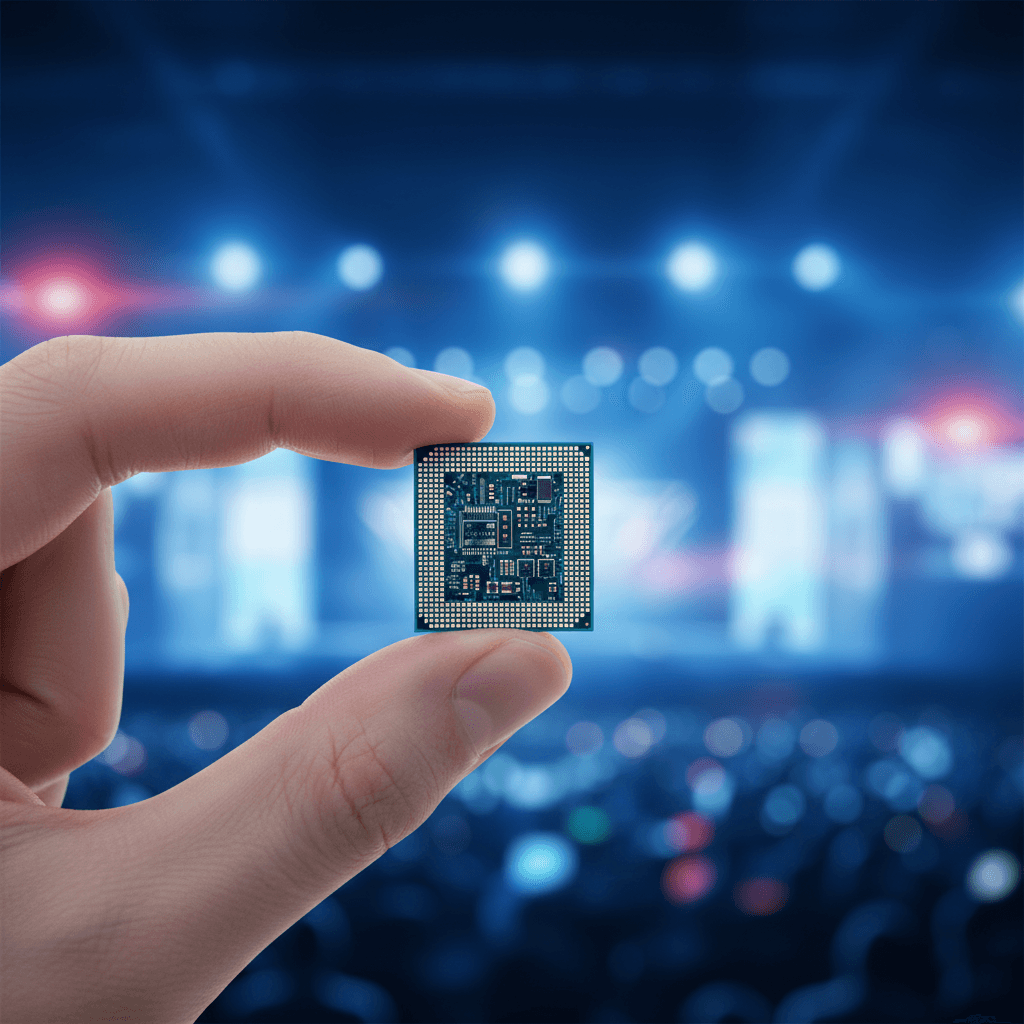

The headline writes itself: Arm makes its first chip. After three decades of licensing designs to the world's biggest technology companies, the Cambridge-born firm held up its own silicon on a stage in San Francisco and dared its partners to keep smiling.

They did, mostly. Meta is the lead partner and co-developer. OpenAI, Cerebras, Cloudflare, SAP, and SK Telecom have all signed on as customers. Nvidia's Jensen Huang, Amazon's James Hamilton, and Google's Amin Vahdat appeared in pre-taped video testimonials. The chip is called the AGI CPU. It packs 136 Neoverse V3 cores and is fabbed by TSMC at 3nm, and Arm says it will reach full production in the second half of this year.

But the real story is not that Arm built a chip. The real story is why the world's most successful design licensor decided that licensing alone could no longer capture enough value from the AI buildout, and what that tells us about where the semiconductor industry is actually headed.

The licensing trap

Arm's business model has been elegant for decades. Design once, license everywhere. Apple, Qualcomm, Samsung, Amazon, Nvidia, Microsoft, Tesla: all of them pay Arm for the privilege of building on its architecture. Arm collects royalties on every chip shipped. By the 2010s, Arm-based processors ran in virtually every smartphone on the planet.

That model worked because the phone market was enormous and fragmented. Hundreds of manufacturers needed Arm designs. Nobody wanted to build from scratch.

AI data centers are different. The customers are fewer and richer. They are already designing their own chips: Google has TPUs, Amazon has Graviton and Trainium, Meta has MTIA. When your biggest licensees start doing in-house silicon, the royalty math changes. You are still collecting a fee, but you are watching other companies capture the margins on the hardware that runs the most valuable workloads in computing.

CEO Rene Haas put it plainly at the launch event: "Let me be clear: We are now in a new business for Arm, and we are supplying CPUs." He framed it as customer demand. That is true, but incomplete. Arm is also chasing a market that its own licensing model was never built to fully monetize.

Who benefits

The immediate winners are the companies too small or too focused to design their own data center CPUs. Arm's cloud AI head Mohamed Awad told CNBC the goal is to serve companies that cannot afford in-house processors. That is a real gap. Not every AI startup or enterprise can do what Google and Amazon do.

Meta benefits too, but differently. The company is both a customer and a co-developer, committed to "multiple generations" of the AGI CPU roadmap according to Arm's official announcement. Meta's infrastructure chief Santosh Janardhan appeared on stage and talked about needing more silicon for "personal superintelligence," the deeply personalized AI the company is building into its apps. Meta has reportedly struggled with its own chip program. Partnering with Arm on CPU design while continuing to develop MTIA for inference and training gives Meta a two-track approach to silicon.

The numbers Arm is citing are aggressive. More than 2x performance per rack versus x86 CPUs. Up to $10 billion in capital expenditure savings per gigawatt of AI data center capacity. Separately, the research firm Creative Strategies forecasts the data center CPU market growing from $25 billion this year to $60 billion by 2030, and closer to $100 billion when agentic AI workloads are factored in.

Even a small slice of $100 billion is a business worth building a chip for.

Who loses

Intel and AMD should be paying close attention. The AGI CPU is designed specifically for the CPU-side orchestration work in AI data centers: coordinating accelerators, managing data movement, handling the token throughput that agentic AI requires. That is exactly the territory where x86 incumbents have lived for decades.

Arm is not being subtle about the target. The company claims its chip eliminates the "overhead and complexity of x86 processors." AWS's James Hamilton reinforced the point in his testimonial by noting that the majority of compute capacity AWS added in 2025 was Graviton, Amazon's in-house CPU built on the Arm architecture.

But the more interesting tension is between Arm and its own licensees. Ben Bajarin, CEO of Creative Strategies, told Wired that Arm "could be perceived more as a competitor than partner as its strategy evolves." Right now the AGI CPU is a narrow product, a specialized CPU for agentic AI. But Bajarin noted that Arm may expand into more general-purpose CPUs over time, which would put it in direct competition with customers who have spent years building on its designs.

Notably absent from the congratulatory chorus: Qualcomm, which won what it called a "complete victory" over Arm in their licensing dispute last year. That silence is louder than any press release.

The pattern we have seen before

This move has a precise historical analog. In the early 1990s, Intel shifted from being primarily a memory and contract chip manufacturer into an integrated design-and-fabrication company with its own branded processors. The "Intel Inside" campaign turned a component maker into a platform company. It also turned Intel's former customers into competitors, and it reshaped the entire PC industry for two decades.

Arm is running a version of that play, but with one critical difference. Intel owned its fabs. Arm is using TSMC. That means Arm gets the speed and scale of the world's best foundry without the capital burden of building its own manufacturing. It is an asset-light vertical integration, which is either brilliant or fragile depending on how you think about supply chain concentration.

The deeper structural force here has been building for at least five years. As AI models grew larger and inference costs became the bottleneck, every layer of the stack started pulling toward vertical integration. Cloud companies designed their own chips. Chip companies built their own software stacks. Now the design licensor is building its own chips. The pull of AI economics is collapsing the old horizontal layers of the semiconductor industry into vertically integrated columns.

What this looks like in five years

Arm will not replace its licensing business. The royalties from smartphones, IoT, automotive, and the rest of the Arm-based universe are too valuable to abandon. What Arm is doing is adding a second revenue line: direct silicon sales for the highest-value, fastest-growing segment of the chip market.

If the AGI CPU delivers on its performance claims, and if Meta's co-development commitment holds across multiple generations, Arm will have a real business selling into AI data centers within two years. The $100 billion market projection for 2030 is an estimate, but the direction is not in doubt. Agentic AI requires more CPUs per rack, and those CPUs need to be power-efficient. That is exactly what Arm has spent 30 years optimizing.

The companies that should worry most are not the hyperscalers. Google, Amazon, and Microsoft will keep building their own silicon regardless. The companies at risk are the mid-tier chip designers who relied on being the bridge between Arm's IP and the data center. That bridge now has a competitor standing on it.

Arm just told the semiconductor industry something it has been whispering for years: if you are going to eat the AI infrastructure pie, you need to be sitting at the table, not just selling the silverware.

Jules Okonkwo covers technology for The Daily Vibe.