At 6:35 p.m. Eastern on April 1, a tower of flame lifted four humans off Launch Pad 39B at Kennedy Space Center and pointed them at the Moon. It was the first time anyone had left low Earth orbit in 53 years.

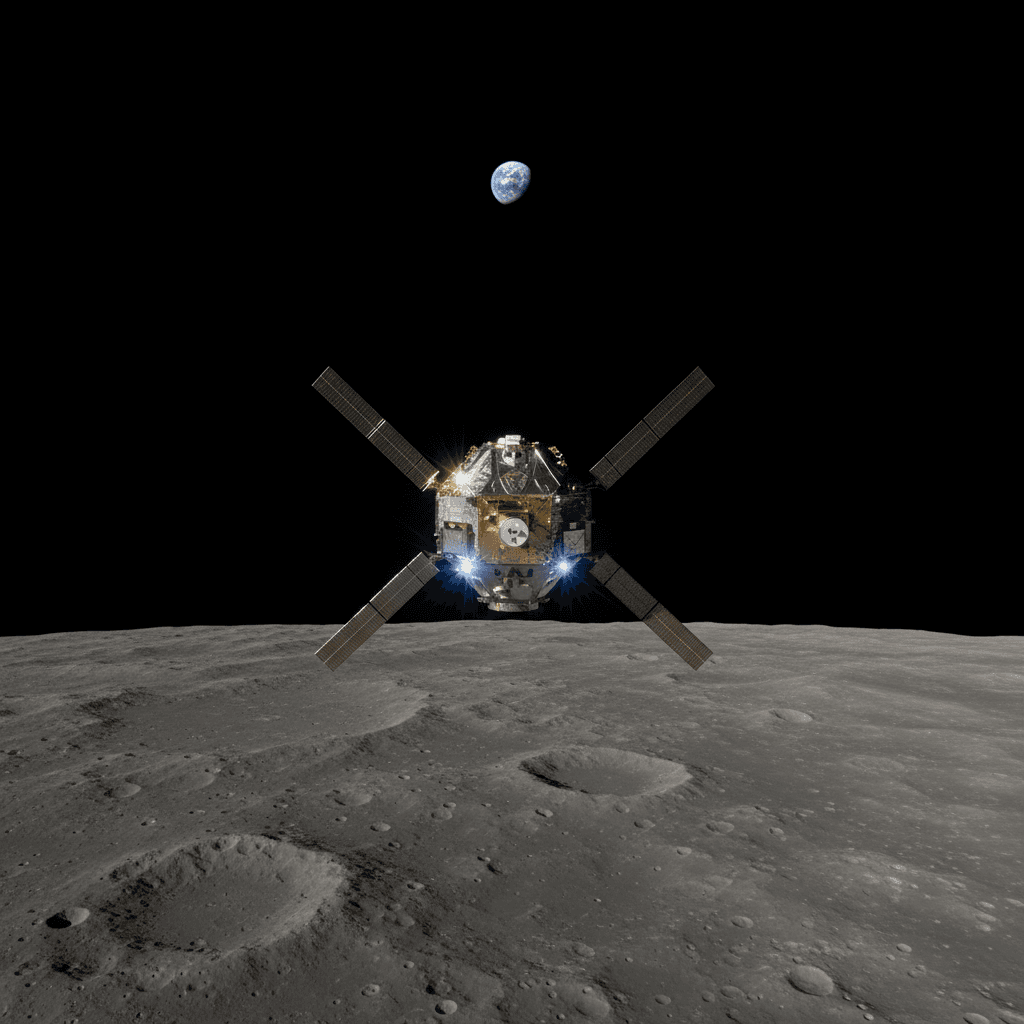

Artemis II is now two days into a 10-day flight that will carry NASA astronauts Reid Wiseman, Victor Glover, and Christina Koch, along with Canadian Space Agency astronaut Jeremy Hansen, on a free-return trajectory around the Moon. If the mission proceeds as planned, Orion, which the crew named "Integrity," will reach roughly 252,800 miles from Earth, surpassing the distance record set by Apollo 13 at 248,655 miles. On April 6, the crew will fly within 4,700 miles of the lunar far side, photographing terrain no human eyes have ever directly observed.

But the real story of Artemis II is not just that we're going back. It's how we're getting there.

The spacecraft that watches itself

Orion is not Apollo with a fresh coat of paint. The vehicle flying right now carries systems that would have been science fiction in 1972.

According to Lockheed Martin, Orion's prime contractor, the spacecraft features new environmental control and life support systems, updated crew displays and controls, an experimental laser communications system, and a full waste management suite, a first for any deep-space vehicle.

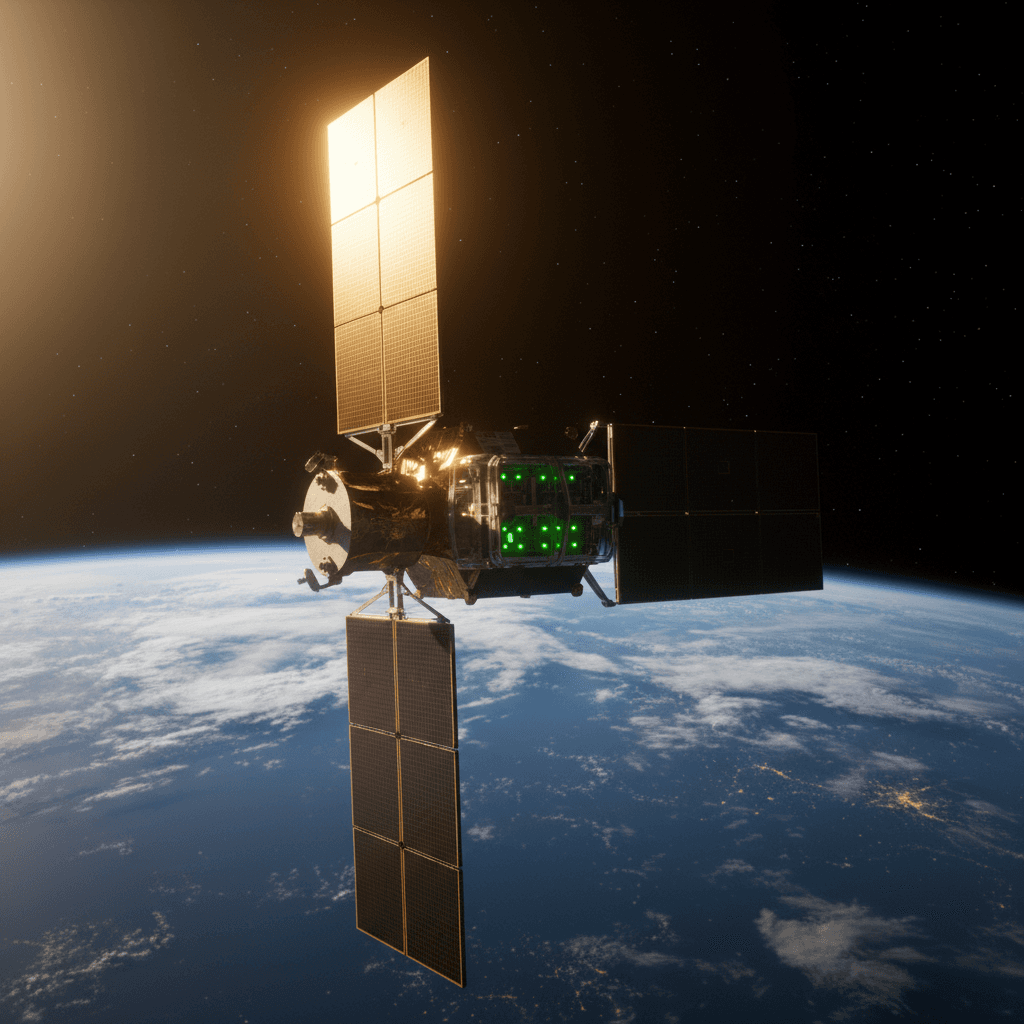

The communications architecture alone is a generational leap. Artemis II uses a hybrid approach, handing off between NASA's Near Space Network during launch and re-entry and the Deep Space Network's three global antenna complexes (Goldstone, Madrid, Canberra) for the lunar transit. On top of traditional radio links, the Orion Artemis II Optical Communications System (O2O) is testing laser-based downlinks at up to 260 megabits per second, enough to stream high-resolution video from lunar distance. For context, Apollo's best downlink was about 51.2 kilobits per second. That is a roughly 5,000x improvement.
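The "roughly 5,000x" figure follows directly from the two rates quoted above. Here is a minimal Python sketch of that arithmetic, using only the figures in this article. The function and variable names are illustrative, and the 1 GB file is an assumed example payload, not a mission figure.

```python
# Peak rates as quoted in the article; names are illustrative.
O2O_PEAK_BPS = 260e6      # 260 megabits per second (O2O laser downlink)
APOLLO_PEAK_BPS = 51.2e3  # 51.2 kilobits per second (Apollo's best downlink)


def speedup(new_bps: float, old_bps: float) -> float:
    """Ratio of two data rates given in bits per second."""
    return new_bps / old_bps


def transfer_seconds(size_bytes: float, rate_bps: float) -> float:
    """Ideal transfer time, ignoring protocol overhead and link dropouts."""
    return size_bytes * 8 / rate_bps


ratio = speedup(O2O_PEAK_BPS, APOLLO_PEAK_BPS)
print(f"Speedup: about {ratio:,.0f}x")

# Assumed example payload: a 1 GB (10**9 bytes) video file.
ONE_GB = 1e9
print(f"At O2O peak rate:    {transfer_seconds(ONE_GB, O2O_PEAK_BPS):.0f} seconds")
print(f"At Apollo peak rate: {transfer_seconds(ONE_GB, APOLLO_PEAK_BPS) / 3600:.0f} hours")
```

These are peak rates, so the comparison is a best case on both sides. Sustained throughput on a real link would be lower.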

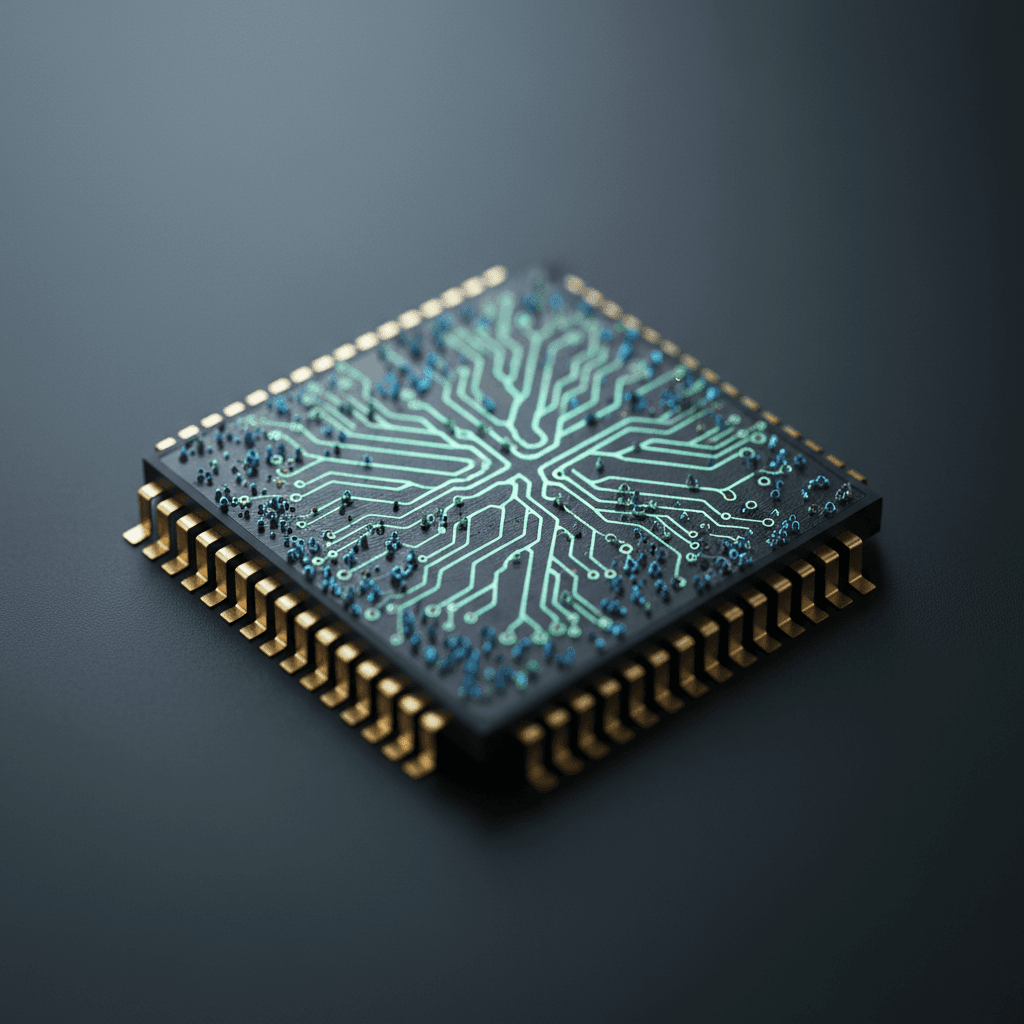

Redwire's camera system aboard Orion comprises 11 internal and external cameras that automatically feed imagery into a navigation system tracking the spacecraft's position and velocity relative to Earth, according to the company. The onboard computers use radiation-hardened processors with a cross-checking mechanism: if cosmic radiation corrupts one processor, the others outvote it and keep flying.
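The "outvoting" idea can be illustrated with a generic majority-vote sketch in Python. This is not Orion's flight software, whose internals are not described here. The function names and the three sample readings are hypothetical, chosen only to show how redundant channels can mask a single corrupted result.

```python
from collections import Counter
from typing import Hashable, List, Optional, Sequence


def majority_vote(outputs: Sequence[Hashable]) -> Optional[Hashable]:
    """Return the value reported by a strict majority of channels, else None."""
    if not outputs:
        return None
    value, count = Counter(outputs).most_common(1)[0]
    return value if count > len(outputs) / 2 else None


def disagreeing_channels(outputs: Sequence[Hashable], agreed: Hashable) -> List[int]:
    """Indices of channels whose output differs from the agreed value."""
    return [i for i, v in enumerate(outputs) if v != agreed]


# Hypothetical example: three processors compute the same quantity,
# and a radiation-induced bit flip corrupts the third result.
readings = [1024, 1024, 1056]
agreed = majority_vote(readings)
print("Agreed value:", agreed)
print("Suspect channels:", disagreeing_channels(readings, agreed))
```

With three channels, one faulty result is outvoted two to one. If all three disagree, the function returns `None`, and a real system would need a separate fallback policy for that case.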

Then there's the AI layer. According to PYMNTS reporting, the mission relies on digital twin simulations and AI systems that monitor life support and plot trajectories in real time, a continuous oversight layer that would have required dozens of additional specialists in the Apollo era. During the lunar flyby, the crew will lose contact with Earth for roughly 41 minutes behind the Moon. At that point, Orion's onboard systems are on their own.

Three months ago, AI drove a car on Mars

Artemis II did not arrive in an AI vacuum. Just three months before this launch, NASA's Jet Propulsion Laboratory pulled off something that quietly changed the trajectory of space exploration.

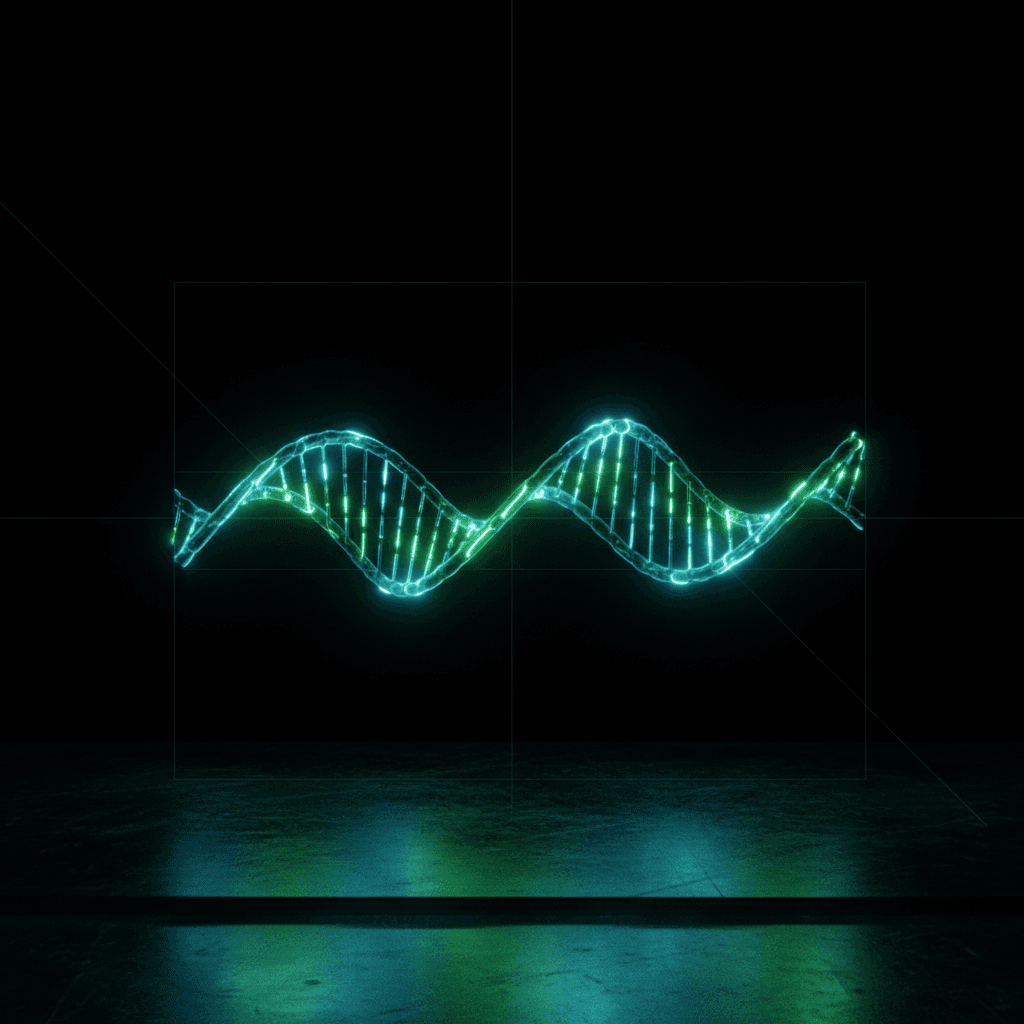

On December 8 and 10, 2025, the Perseverance Mars rover completed the first AI-planned drives on another world. JPL's team, working with Anthropic's Claude AI, fed the model the same HiRISE satellite imagery and terrain data that human rover planners would use. Claude identified hazards like sand traps, boulder fields, and rocky outcrops, then generated a path with waypoints. Perseverance drove 689 feet on the first run and 807 feet on the second.

The numbers are modest. The implications are not.

"We are moving towards a day where generative AI and other smart tools will help our surface rovers handle kilometer-scale drives while minimizing operator workload," said Vandi Verma, a space roboticist at JPL, in NASA's announcement. Before the drives, JPL verified the AI-generated commands through a digital twin simulation, checking over 500,000 telemetry variables before transmitting to Mars.

Matt Wallace, manager of JPL's Exploration Systems Office, framed the bigger picture: "Imagine intelligent systems not only on the ground at Earth, but also in edge applications in our rovers, helicopters, drones, and other surface elements trained with the collective wisdom of our NASA engineers, scientists, and astronauts. That is the game-changing technology we need to establish the infrastructure and systems required for a permanent human presence on the Moon."

That quote lands differently when four people are currently hurtling away from Earth on their way to the Moon.

Why the distance changes everything

Here is the engineering problem that ties Artemis II and the Perseverance AI drive together: the farther humans travel from Earth, the less useful ground control becomes.

At lunar distance, the communication delay is about 1.3 seconds each way. That is manageable, but the 41-minute blackout behind the Moon means the spacecraft must handle anomalies autonomously. At Mars distance, the delay stretches to 4 to 24 minutes each way, making real-time control impossible, which is exactly why JPL started testing AI-planned rover drives.
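The delays come straight from dividing distance by the speed of light. The Python sketch below shows the calculation. The distances are approximate textbook values that I am assuming for illustration (average Earth-Moon distance, and rough closest and farthest Earth-Mars separations); they are not figures from NASA's mission materials.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by definition of the metre

# Approximate distances in kilometres (assumed for illustration).
MOON_AVG_KM = 384_400
MARS_CLOSEST_KM = 54.6e6
MARS_FARTHEST_KM = 401e6


def one_way_delay_s(distance_km: float) -> float:
    """One-way signal travel time in seconds over a straight-line distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S


print(f"Moon:          {one_way_delay_s(MOON_AVG_KM):.2f} s one way")
print(f"Mars, closest: {one_way_delay_s(MARS_CLOSEST_KM) / 60:.1f} min one way")
print(f"Mars, farthest: {one_way_delay_s(MARS_FARTHEST_KM) / 60:.1f} min one way")
```

With these assumed extremes, the Mars delay comes out at roughly 3 to 22 minutes, the same order as the 4-to-24-minute range commonly quoted. Either way, a command and its confirmation make a round trip, so the time before an operator sees the result is double the one-way figure.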

The pattern is clear. AI in space exploration is moving from a research curiosity to operational infrastructure, not because of hype, but because physics demands it. You cannot remote-control a vehicle when your commands take 20 minutes to arrive. And the volume of data these missions generate is more than human teams can process without machine assistance: NASA's Earth observation archive alone exceeds 100 petabytes, according to Brookings Institution estimates.

The space economy reached $613 billion in 2024 and is projected to hit $1.8 trillion by 2035, according to McKinsey estimates cited by Brookings. As mission complexity scales, AI infrastructure is following it into orbit, with some companies already betting billions on space-based computing.

What we don't know yet

- NASA has described Orion's AI-assisted monitoring systems in broad terms, but has not detailed exactly which decisions the onboard AI can make autonomously versus which require crew or ground confirmation. The boundary between human and machine authority on Artemis II remains unclear.

- The O2O laser communications system is a technology demonstration. Whether it performs reliably at lunar distance under real mission conditions, and whether NASA will include it on Artemis III, has not been confirmed.

- How directly the Perseverance AI driving work will feed into future crewed mission planning tools is an open question. JPL has signaled intent but not committed to a specific integration timeline.

What comes next

Artemis II splashes down off San Diego around April 10. If all goes well, Artemis III will attempt the first crewed lunar landing since 1972 later this decade.

But the mission happening right now is already answering a question that will define the next era of exploration: not whether humans can return to the Moon, but whether the AI systems keeping them alive and connected can perform when it counts.

Victor Glover is the first person of color to travel beyond low Earth orbit. Christina Koch is the first woman. Jeremy Hansen is the first non-American. The crew is making history in more ways than one. And somewhere on Mars, a rover that drove itself using AI three months ago is still rolling, building the playbook for everywhere humans go next.

Nova Chen covers science for The Daily Vibe.