The Pentagon Made Palantir Its Brain. Now What?

For years, Palantir Technologies operated in the shadow corridors of American military power — visible enough to be controversial, obscure enough to avoid real accountability. That changed on March 20, when Reuters published an internal Pentagon memo that should alarm anyone who thinks carefully about what it means when a private company becomes indistinguishable from a nation's war machine.

Deputy Secretary of Defense Steve Feinberg signed a letter on March 9 designating Palantir's Maven Smart System as an official "program of record" across all branches of the U.S. military. By September 2026, Maven won't just be the Pentagon's primary AI tool; it will be baked into the institutional budget, the contracting structure, and the chain of command. This isn't a contract. It's a merger of sorts, without the regulatory scrutiny a merger would normally invite.

What Maven Actually Does

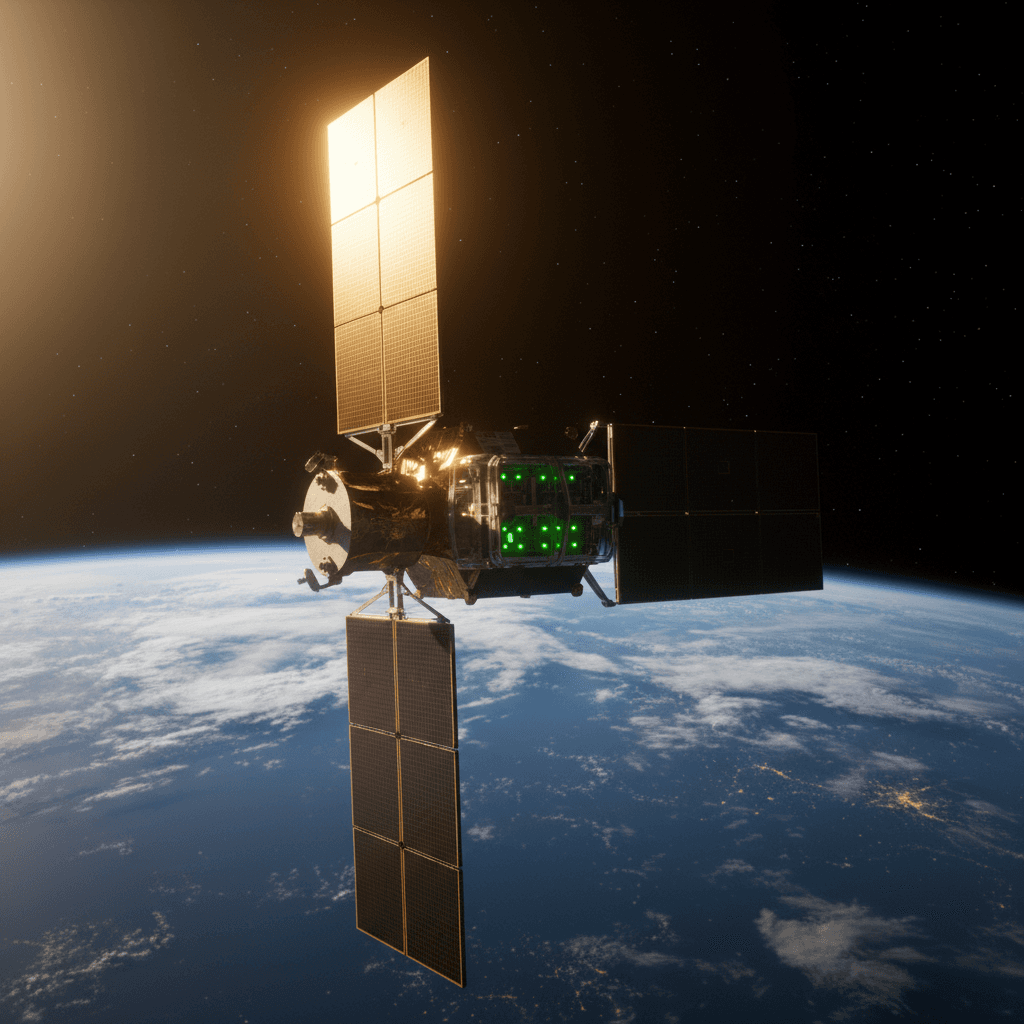

Maven is a command-and-control platform. It ingests battlefield data — feeds from satellites, drones, radars, sensors, intelligence reports — processes it through AI, and hands operators a ranked list of what it thinks are targets. Enemy vehicles. Buildings. Weapons stockpiles. People.

The system has already been central to thousands of U.S. airstrikes against Iran over the past three weeks, according to Reuters. That's not a test environment. That's a live targeting system running at scale during active military operations, and the U.S. government just decided it should be the permanent foundation for how it wages war.

Feinberg's language in the memo is worth sitting with. He wrote that embedding Maven would give warfighters "the latest tools necessary to detect, deter, and dominate our adversaries in all domains." Dominate in all domains. That's the ambition — and it's Palantir's code doing the dominating.

How We Got Here: The Anthropic Fallout

The timing of this announcement isn't coincidental. What Reuters' exclusive didn't fully surface — but Semafor's follow-up reporting did — is that this decision accelerated after a high-profile breakdown between the Pentagon and Anthropic.

Anthropic recently refused to permit its Claude AI model to be used for mass surveillance operations or fully autonomous weapons systems. The Pentagon's response was blunt: it moved to classify Anthropic as a "supply chain risk," which effectively bars other defense suppliers from integrating Claude into their systems.

The uncomfortable irony is that Maven currently uses Claude to analyze the intelligence data it collates. Palantir now has to reengineer parts of its own platform to rip out a model it depends on, because that model's creator had the audacity to draw an ethical line. The engineering challenge is real. The precedent it sets is worse: defense contractors that insist on limits over how their AI is used get frozen out, and the ones that comply get handed a billion company's worth of institutional lock-in.

The Program of Record Matters More Than the Headlines Suggest

"Program of record" sounds bureaucratic. It's actually the difference between a recurring contract and a permanent fixture. Once a system achieves this designation, it gets folded into the defense budget baseline. It becomes part of multi-year planning cycles. Ripping it out requires an act of Congress — literally.

Feinberg's memo also restructured Maven's oversight chain. The National Geospatial-Intelligence Agency, which had been running Maven, hands off control to the Pentagon's Chief Digital and Artificial Intelligence Office within 30 days. Future contracting runs through the Army. This is centralization, not just adoption — it's designed to make Maven harder to challenge, harder to audit, and harder to remove.

Palantir already landed a deal worth up to billion with the U.S. Army last summer. Its stock has roughly doubled over the past year, pushing its market capitalization near billion. The Maven program-of-record designation is fuel on a fire that was already burning.

The market noticed. PLTR shares jumped 4.4% in afternoon trading on Monday after the news broke, even as insider selling continues at a pace that analysts at TradingKey estimated at nearly million in shares offloaded daily throughout 2026. The executives cashing out while the stock runs — that's not necessarily nefarious, but it's worth tracking.

The Accountability Gap

Here's what concerns me more than the technology itself: there is no publicly accessible framework for what happens when Maven gets it wrong.

AI targeting systems misidentify. It's not a hypothetical — it's a documented pattern across every computer vision system deployed at scale under adversarial conditions. When a Maven-generated target recommendation leads to a strike that kills civilians, who answers for it? Palantir? The Army? The CDAO? The individual operator who approved the strike based on a confidence score they may not fully understand?

The program-of-record designation streamlines adoption. It does not create accountability. And the Anthropic situation demonstrates clearly that the Pentagon's current posture is to punish ethical restraint and reward compliance. If a company's AI team raises concerns about autonomous targeting and the Pentagon's answer is a supply chain risk designation, the incentive structure for the entire defense AI sector just shifted, hard, toward silence.

Palantir CEO Alex Karp has been direct for years about his company's worldview: Western democracies need military AI superiority, and Palantir is built to provide it. That's a coherent argument. The engineering is, by most accounts, genuinely impressive. Maven processes data at a speed and scale no human analyst team could match.

But impressive capability and sound governance are not the same thing. The U.S. military is in the process of outsourcing a significant portion of its targeting cognition to a single private company — one that is now, effectively, too embedded to fail. That's a strategic concentration risk on top of every ethical question the technology already raises.

What Comes Next

The September 2026 deadline is the one to watch. That's when the program-of-record designation formally takes effect. Between now and then, Palantir has to solve its Claude dependency problem, the Army takes over contracting, and Maven gets wired deeper into every branch of the military.

Congress will eventually have to weigh in, not because legislators are especially motivated to limit defense AI spending, but because concentrating this much targeting infrastructure in one private company becomes a national security question on its own terms. What happens if Palantir's systems go down during active operations? What happens if the company faces a cyberattack, a leadership crisis, or a valuation collapse that forces restructuring?

Those aren't edge cases. They're the kinds of risks that "program of record" designations are supposed to account for. Whether the Pentagon actually gamed them out before signing that March 9 memo is a question that deserves a serious answer.

Sources:

- Reuters exclusive: Pentagon to adopt Palantir AI as core US military system, memo says (March 20, 2026)

- Semafor: Pentagon reportedly adopts Palantir as main AI system (March 23, 2026)

- Motley Fool: The Pentagon Just Dropped a Bombshell for Palantir Stock Investors (March 22, 2026)

- Financial Content: Palantir Technologies (PLTR) Stock Trades Up After Maven Designation (March 23, 2026)

- TradingKey: PLTR Market Movers, March 23 (March 23, 2026)