Your CFO just forwarded you an article about AI agents that can buy media autonomously. Your CEO saw a demo at a conference. Someone on the leadership team is now asking why you haven't "deployed agentic AI" yet.

Meanwhile, your team is still reconciling campaign data across three DSPs, two spreadsheets nobody trusts, and a trafficking workflow that depends on one person who's on vacation next week.

This is the gap that matters. Not whether agentic media buying is real (it is, now) but whether your data, processes, and org structure are anywhere close to ready for it. For most teams, the honest answer is not yet. But "not yet" is very different from "not ever," and the preparation work you do in the next six months will determine whether you adopt smoothly or stall for a year.

What AAMP actually is

AAMP stands for Agentic Advertising Management Protocols. IAB Tech Lab formally named the initiative on February 26, 2026, consolidating several separate workstreams that had been causing confusion in the market. It is not a single product. It is an umbrella specification built on three pillars.

The first pillar is execution infrastructure, called ARTF (Agentic RTB Framework). This is the plumbing that lets AI agents participate in real-time ad auctions without breaking the timing constraints that programmatic depends on. According to IAB Tech Lab, ARTF uses container-based architecture and cuts latency by up to 80% compared to traditional external API calls. It runs agents inside the host platform's infrastructure rather than pinging external servers, which matters when auction decisions must complete in under 100 milliseconds.

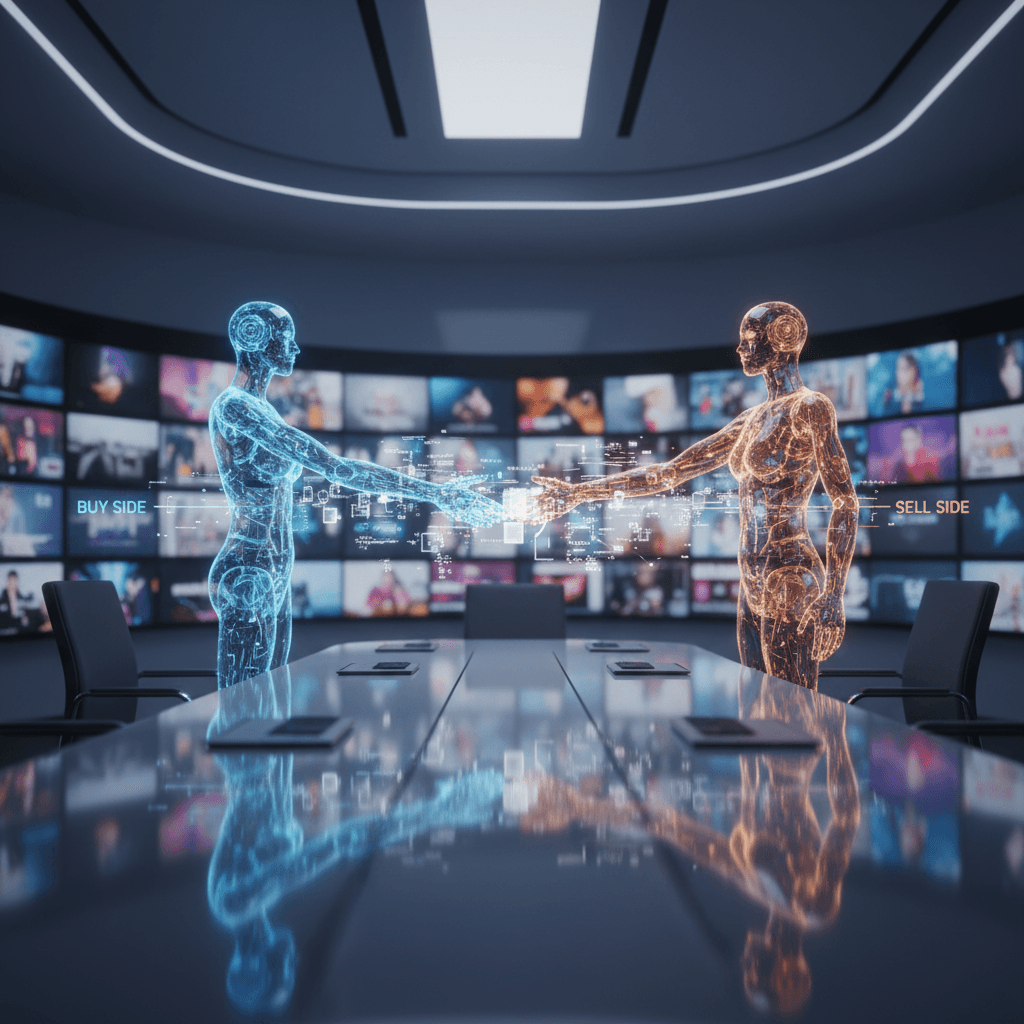

The second pillar is protocols, the standardized schemas that let buyer agents and seller agents understand each other. Think of this as the shared language: how an agent requests inventory, how it structures a deal, how it reports back on performance. IAB Tech Lab built these on top of existing standards like OpenDirect, OpenRTB, and AdCOM rather than starting from scratch.

The third pillar is trust and transparency, anchored by an Agent Registry where agents are identified, verified, and disclosed. This is the part that answers the question your compliance team will ask first: "How do we know what an agent did and why?"

In practical terms, AAMP defines a three-level agent hierarchy. At the top sits a Portfolio Manager handling strategic decisions like budget allocation and channel mix. Below that, Channel Specialists translate strategy into medium-specific tactics (a CTV agent, a display agent, a mobile agent). At the bottom, execution agents handle the actual transactions.
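To make the division of labor concrete, here is a minimal Python sketch of how budget might flow down that hierarchy. The class names, fields, and the even split across execution agents are illustrative assumptions, not the AAMP schema. The only point it makes is that strategy (the channel mix) is set at the top and transactions happen at the bottom.

```python
from dataclasses import dataclass, field

# Hypothetical sketch of the three-level hierarchy. Class names, fields, and the
# even budget split are illustrative assumptions, not the AAMP schema.


@dataclass
class ExecutionAgent:
    name: str
    budget: float = 0.0  # spend this agent is cleared to transact


@dataclass
class ChannelSpecialist:
    channel: str  # e.g. "ctv", "display", "mobile"
    executors: list[ExecutionAgent] = field(default_factory=list)

    def assign(self, budget: float) -> None:
        # Translate a channel budget into per-executor budgets. An even split is a
        # placeholder; a real specialist would weight by inventory and performance.
        if not self.executors:
            raise ValueError(f"no execution agents for {self.channel}")
        share = budget / len(self.executors)
        for agent in self.executors:
            agent.budget = share


@dataclass
class PortfolioManager:
    total_budget: float
    specialists: dict[str, ChannelSpecialist]

    def allocate(self, channel_mix: dict[str, float]) -> dict[str, float]:
        # Strategic decision: channel_mix maps channel -> share of total budget.
        if abs(sum(channel_mix.values()) - 1.0) > 1e-9:
            raise ValueError("channel mix must sum to 1.0")
        allocation = {}
        for channel, share in channel_mix.items():
            amount = self.total_budget * share
            self.specialists[channel].assign(amount)
            allocation[channel] = amount
        return allocation


pm = PortfolioManager(
    total_budget=100_000,
    specialists={
        "ctv": ChannelSpecialist("ctv", [ExecutionAgent("ctv-exec-1")]),
        "display": ChannelSpecialist(
            "display", [ExecutionAgent("display-exec-1"), ExecutionAgent("display-exec-2")]
        ),
    },
)
allocation = pm.allocate({"ctv": 0.6, "display": 0.4})
```

Here a 60/40 mix sends 60,000 to the CTV specialist and 40,000 to display, which the display specialist splits across its two execution agents. Nothing at the bottom of the tree decides the mix; it only spends what it was handed.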

As of March 25, 2026, this is no longer theoretical. Kochava opened its StationOne platform to public beta with 19 specialized skills across 8 functional areas, running on the official IAB Tech Lab reference implementation. All agent definitions are open source, so you can see exactly what the agents do before trusting them with anything. The Daily Vibe covered the technical details of the AAMP spec and the StationOne launch when it happened.

What changes for your team

The first thing to understand: agentic buying does not eliminate ad ops roles. It changes what those roles do.

Today, a significant chunk of your team's time goes to campaign setup, trafficking, reconciliation, and optimization adjustments. According to Digiday, Butler/Till ran the first public test of agentic media buying from December 2025 through January 2026 for brewer Geloso Beverage Group, and PubMatic reported a 98% reduction in the time it took to set up a campaign. The test also showed an 82% reduction in supply chain costs (primarily DSP tech fees), a 40% lift in impressions delivered, and a 30% reduction in CPMs, according to results reviewed by Digiday.

Those are early numbers from a controlled test, not guarantees. But they point to the operational shift: agents handle the repetitive execution, and your team moves toward oversight, strategy, and exception handling.

Here is what that means in practice. Your trafficking team becomes an agent-supervision team. Instead of manually building line items, they review what agents propose and approve or reject. Your media planners spend less time pulling inventory availability reports and more time defining the strategic constraints agents operate within. Your analytics function shifts from reporting what happened to defining the success criteria agents optimize against.

This sounds like an upgrade, and it can be. But it requires your people to develop new skills: understanding what agents can and cannot do, reading agent decision logs, knowing when to override. If your team currently operates on muscle memory and institutional knowledge that lives in nobody's documentation, agents will not absorb that knowledge automatically.

What your data needs to look like first

This is where most teams will stall, and it is worth being blunt about it.

Funnel's Marketing Intelligence Report found that 55% of media buyers say they have plenty of data but cannot turn it into actionable insights. Agents do not fix that problem. They amplify it. An agent operating on inconsistent taxonomy, duplicated audience segments, or campaign naming conventions that vary by team will make bad decisions faster than a human would.

Before an agent can buy effectively, you need four things in place.

Consistent campaign taxonomy. Every campaign, ad group, and creative needs to follow a naming and classification structure that a machine can parse. If your team uses free-text campaign names with no enforced convention, start here. Expect a one-to-two-month project for a team of two, depending on how many active campaigns you are running.
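What does "a structure a machine can parse" look like in practice? Here is a minimal sketch, assuming a hypothetical convention of CLIENT_CHANNEL_OBJECTIVE_YYYYMM and placeholder lists of allowed channels and objectives. A name either yields structured fields an agent can use, or it is rejected.

```python
import re
from typing import Optional

# Hypothetical convention: CLIENT_CHANNEL_OBJECTIVE_YYYYMM, e.g. "ACME_CTV_AWARENESS_202604".
# The allowed channels and objectives are placeholder assumptions; substitute your own.
CHANNELS = {"CTV", "DISPLAY", "MOBILE", "AUDIO"}
OBJECTIVES = {"AWARENESS", "CONSIDERATION", "CONVERSION"}
PATTERN = re.compile(
    r"(?P<client>[A-Z0-9]+)_(?P<channel>[A-Z]+)_(?P<objective>[A-Z]+)_(?P<yyyymm>\d{6})"
)


def parse_campaign_name(name: str) -> Optional[dict]:
    """Return structured fields if the name follows the convention, else None."""
    match = PATTERN.fullmatch(name)
    if not match:
        return None
    fields = match.groupdict()
    month = int(fields["yyyymm"][4:])
    # Controlled vocabularies stop "CTV" drifting into "ctv", "OTT", "StreamingTV".
    if fields["channel"] not in CHANNELS or fields["objective"] not in OBJECTIVES:
        return None
    if not 1 <= month <= 12:
        return None
    return fields
```

With this convention, `parse_campaign_name("ACME_CTV_AWARENESS_202604")` returns the client, channel, objective, and month as separate fields, while `"acme spring push v2 FINAL"` returns `None`. Checking against fixed vocabularies, not just a pattern, is what keeps the same channel from appearing under three different labels across teams.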

Clean audience definitions. Agents need to translate audience requirements into targeting parameters. If your audience segments live in multiple platforms with different definitions, overlapping membership, or unclear refresh schedules, the agent will either pick the wrong segment or default to broad targeting. Audit your segments. Document what each one actually contains. Expect this to take two to four weeks if you have fewer than 50 segments, longer if you have hundreds.
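One way to start the segment audit is to measure how much segments overlap. The sketch below assumes you can export member IDs (hashed or otherwise) per segment, which not every platform allows. It uses Jaccard similarity, the shared members divided by the combined members, with a hypothetical 0.8 cutoff for "probably the same audience."

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    # Overlap between two segments: 0.0 (disjoint) to 1.0 (identical membership).
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_overlapping_segments(
    segments: dict[str, set], threshold: float = 0.8
) -> list[tuple[str, str, float]]:
    """Flag segment pairs whose overlap meets a (hypothetical) threshold, highest first."""
    flagged = []
    for (name_a, members_a), (name_b, members_b) in combinations(segments.items(), 2):
        score = jaccard(members_a, members_b)
        if score >= threshold:
            flagged.append((name_a, name_b, round(score, 2)))
    return sorted(flagged, key=lambda pair: pair[2], reverse=True)


segments = {
    "dsp1_auto_intenders": {1, 2, 3, 4, 5},
    "dsp2_car_shoppers": {1, 2, 3, 4, 6},
    "sports_fans": {7, 8, 9},
}
```

The two auto segments share 4 of 6 combined members (about 0.67), so they fall below the default cutoff but surface at `threshold=0.6`. Pairs that clear the threshold are candidates for consolidation or, at minimum, a single documented definition.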

Reliable conversion and attribution data. Agents optimize against the signals you give them. If your attribution model is last-click in one platform and multi-touch in another, the agent has no consistent definition of success. Pick a source of truth and document its limitations.
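A quick way to show why a single source of truth matters is to line up what each platform claims against it. The sketch below uses hypothetical report fields. The claimed-to-actual ratio is a simple heuristic: when several platforms each take credit for the same conversion, they collectively report more conversions than actually happened.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlatformReport:
    platform: str
    attribution_model: str  # as labeled in the platform's own reporting
    conversions: int


def attribution_gaps(
    reports: list[PlatformReport], truth_model: str, truth_total: int
) -> dict:
    """Compare platform-claimed conversions with one chosen source of truth."""
    claimed = sum(r.conversions for r in reports)
    ratio: Optional[float] = round(claimed / truth_total, 2) if truth_total else None
    return {
        # Platforms defining success differently from the source of truth.
        "mismatched_models": sorted(
            r.platform for r in reports if r.attribution_model != truth_model
        ),
        # Above 1.0: platforms collectively claim more conversions than occurred.
        "claimed_to_actual": ratio,
    }


reports = [
    PlatformReport("dsp_a", "last_click", 1200),
    PlatformReport("dsp_b", "multi_touch", 900),
    PlatformReport("social", "view_through", 700),
]
result = attribution_gaps(reports, truth_model="multi_touch", truth_total=2000)
```

In this made-up example, platforms claim 2,800 conversions against 2,000 in the source of truth, a ratio of 1.4, and two of the three measure success under a different model. An agent optimizing against the platform numbers would be optimizing against inflated figures.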

API access to your buying platforms. AAMP's protocols run through standardized APIs. If your DSP contracts do not include API access, or if your team has never used the APIs your platforms already offer, that is a prerequisite to sort out. Some platforms charge extra for API access. Budget for it.

The organizational questions nobody is asking yet

When an agent makes a buying decision that goes wrong, who is responsible?

This is not a philosophical question. It is an operational one that your leadership needs to answer before any agent touches a live budget. Here are the questions to put on the table now.

Who owns the agent configuration? Someone needs to be accountable for the strategic constraints, budget limits, and brand safety parameters the agent operates within. This is not an engineering role. It is a senior media ops role with enough context to know what "good" looks like for each client.

Who audits agent decisions? The AAMP Agent Registry provides identity and verification, but internal audit is different from external compliance. You need someone reviewing agent decision logs on a regular cadence, the same way you would review a junior buyer's work. In the Butler/Till test, human staff reviewed and approved the agent's inventory selections before execution proceeded. Expect that human-in-the-loop pattern to persist for at least the next 12 to 18 months.
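The review pattern from the Butler/Till test can be sketched as an approval gate: the agent proposes, a named person decides, and both the agent's rationale and the human decision land in one log you can audit later. This is a minimal illustration with hypothetical field names. It does not reflect any AAMP or StationOne interface, and the actual hand-off to execution is platform-specific and left out.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Proposal:
    agent_id: str
    line_item: str
    spend: float
    rationale: str  # the agent's stated reason, as recorded in its decision log


@dataclass
class ApprovalGate:
    """Nothing proceeds until a named person approves it; every decision is logged."""
    log: list = field(default_factory=list)

    def review(self, proposal: Proposal, reviewer: str, approved: bool, note: str = "") -> bool:
        self.log.append({
            "reviewed_at": datetime.now(timezone.utc).isoformat(),
            "agent_id": proposal.agent_id,
            "line_item": proposal.line_item,
            "spend": proposal.spend,
            "agent_rationale": proposal.rationale,
            "reviewer": reviewer,
            "decision": "approved" if approved else "rejected",
            "note": note,
        })
        # Caller only hands the proposal to execution when this returns True.
        return approved

    def rejection_rate(self) -> float:
        # Share of reviewed proposals that a human rejected.
        if not self.log:
            return 0.0
        rejected = sum(1 for entry in self.log if entry["decision"] == "rejected")
        return rejected / len(self.log)
```

Tracking `rejection_rate()` on the same cadence as the log review gives a crude trend line: if reviewers keep rejecting proposals, that is often a sign the agent's constraints need revisiting.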

Who handles exceptions? Agents will encounter situations they are not trained for: a publisher pulling inventory mid-flight, a brand safety incident, a sudden budget reallocation. Your team needs a clear escalation path from agent to human, with defined response times.
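An escalation path with defined response times can be written down as a simple policy table. The exception types, owners, windows, and pause rules below are hypothetical placeholders. The design choice worth copying is the default route, which sends anything the policy does not name to a human with spend paused, instead of letting the agent improvise.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical policy: exception type -> (owner role, response window, pause spend?)
ESCALATION_POLICY = {
    "brand_safety_incident": ("senior_media_ops", timedelta(minutes=30), True),
    "inventory_pulled": ("channel_lead", timedelta(hours=4), False),
    "budget_reallocation": ("account_director", timedelta(hours=24), True),
}
# Anything the policy does not name goes to the configuration owner, spend paused.
DEFAULT_ROUTE = ("agent_config_owner", timedelta(hours=1), True)


@dataclass
class Escalation:
    exception_type: str
    owner: str
    respond_by: datetime
    pause_spend: bool


def escalate(exception_type: str, raised_at: datetime) -> Escalation:
    owner, window, pause = ESCALATION_POLICY.get(exception_type, DEFAULT_ROUTE)
    return Escalation(exception_type, owner, raised_at + window, pause)


def is_overdue(escalation: Escalation, now: datetime) -> bool:
    # True once the defined response time has passed without resolution.
    return now > escalation.respond_by
```

A brand safety incident raised at 9:00 lands with senior media ops, pauses spend, and is overdue after 9:30; an unanticipated situation routes to whoever owns the agent configuration.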

What is your liability exposure? If an agent buys inventory on a site that violates your brand safety standards, your contract with the client does not care that a machine made the decision. Legal and procurement need to weigh in on how agent-driven buying fits into your existing service agreements.

Should you pilot now or wait?

Here is a decision framework based on where your team actually is.

Pilot now if your team already has consistent campaign taxonomy, API-connected buying platforms, and at least one person who can read technical documentation and translate it for the ops team. You also need a client or internal stakeholder willing to test on a limited budget with clear success criteria. StationOne's sandbox environment lets you experiment without executing real transactions, which removes the financial risk of early testing.

Wait six months if your campaign naming is inconsistent, your audience segments are poorly documented, or your team has never interacted with your DSP's API. Use that time to fix the foundations. The specification is not going anywhere, and early adopters will surface the implementation problems you can learn from.

Wait twelve months if your buying operation still runs primarily on manual IO-based processes, your data lives in spreadsheets with no API layer, or your organization has not had the ownership and liability conversations yet. Adopting agentic buying on top of manual processes will create more chaos, not less.
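The three tiers above can be read as a checklist. Here is a minimal sketch with hypothetical field names. Checking the twelve-month blockers first reflects the point that agentic buying layered on manual processes adds chaos. Treating a missing pilot prerequisite as a six-month wait is one interpretation, not a rule from the spec.

```python
from dataclasses import dataclass


@dataclass
class Readiness:
    consistent_taxonomy: bool
    documented_audiences: bool
    api_connected_platforms: bool
    team_has_used_dsp_api: bool
    has_technical_translator: bool   # can read technical docs and brief the ops team
    has_pilot_stakeholder: bool      # limited test budget, clear success criteria
    mostly_manual_io_workflow: bool
    data_only_in_spreadsheets: bool
    ownership_and_liability_agreed: bool


def recommend(r: Readiness) -> str:
    # Twelve-month blockers first: agents on top of manual processes add chaos.
    if (r.mostly_manual_io_workflow or r.data_only_in_spreadsheets
            or not r.ownership_and_liability_agreed):
        return "wait 12 months"
    # Six-month blockers: foundations to fix before any pilot.
    if not (r.consistent_taxonomy and r.documented_audiences and r.team_has_used_dsp_api):
        return "wait 6 months"
    # Pilot prerequisites; if any is missing, keep preparing (an interpretation).
    if r.api_connected_platforms and r.has_technical_translator and r.has_pilot_stakeholder:
        return "pilot now"
    return "wait 6 months"
```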

One honest caveat: multiple competing approaches to agentic advertising are already emerging alongside AAMP, including AdCP and various vendor-specific frameworks. According to Digiday, without convergence, the industry risks repeating earlier interoperability problems. IAB Tech Lab's spec has the broadest industry backing (the ARTF working group included Amazon Ads, The Trade Desk, PubMatic, Index Exchange, Magnite, and others), but the standards landscape is still settling. Factor that into your timeline expectations.

What month one looks like

If you decide to start preparing today, here is what the first 30 days look like.

Week one: Audit your taxonomy. Pull your last 50 campaigns from your primary DSP. How many follow a consistent naming convention? How many use free-text fields that only make sense to the person who created them? Document the current state. Do not try to fix it yet.
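If you can export the names of those 50 campaigns, for instance as a CSV download, the week-one audit takes a few lines of code. This sketch assumes a hypothetical CLIENT_CHANNEL_OBJECTIVE_YYYYMM target convention and rough categories for names that miss it. It only measures, in keeping with the "do not fix it yet" rule.

```python
import re
from collections import Counter

# Hypothetical target convention (CLIENT_CHANNEL_OBJECTIVE_YYYYMM); swap in your own.
CONVENTION = re.compile(r"[A-Z0-9]+_[A-Z]+_[A-Z]+_\d{6}")


def classify(name: str) -> str:
    if CONVENTION.fullmatch(name):
        return "compliant"
    if " " in name:
        return "free_text"        # likely meaningful only to whoever typed it
    if name != name.upper():
        return "mixed_case"
    return "other_structure"


def audit_names(names: list[str]) -> dict:
    """Describe the current state; deliberately renames nothing."""
    counts = Counter(classify(n) for n in names)
    return {
        "total": len(names),
        "compliance_rate": counts["compliant"] / len(names) if names else 0.0,
        "by_category": dict(counts),
    }


result = audit_names([
    "ACME_CTV_AWARENESS_202604",
    "acme spring push FINAL",
    "Acme_Display_Q2",
    "ACME-CTV-2026",
])
```

The category counts matter as much as the compliance rate: a team whose misses are mostly "mixed_case" has a cleanup job, while one dominated by "free_text" has a convention problem.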

Week two: Map your data flows. Draw the path from campaign brief to live campaign to performance report. Identify every manual handoff, every spreadsheet, every copy-paste step. These are the points where an agent will either need clean structured data or will fail.
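Writing the map down as data, not only as a whiteboard drawing, makes it easy to list the manual steps and to spot places where the map skips a step. Here is a minimal sketch with hypothetical step names and mechanism labels.

```python
from dataclasses import dataclass


@dataclass
class Handoff:
    source: str     # e.g. "campaign brief"
    target: str     # e.g. "DSP line items"
    mechanism: str  # how data moves: "api", "spreadsheet", "copy_paste", "email", ...


# Mechanisms treated as manual; adjust to match your own map.
MANUAL_MECHANISMS = {"spreadsheet", "copy_paste", "email", "manual_entry"}


def manual_handoffs(flow: list[Handoff]) -> list[str]:
    """Every step where a person moves data by hand."""
    return [
        f"{h.source} -> {h.target} via {h.mechanism}"
        for h in flow
        if h.mechanism in MANUAL_MECHANISMS
    ]


def gaps_in_chain(flow: list[Handoff]) -> list[str]:
    """Places where one step's output is not the next step's input."""
    return [f"{a.target} != {b.source}" for a, b in zip(flow, flow[1:]) if a.target != b.source]


flow = [
    Handoff("campaign brief", "media plan spreadsheet", "email"),
    Handoff("media plan spreadsheet", "DSP line items", "copy_paste"),
    Handoff("DSP line items", "performance report", "api"),
]
```

In this example, two of three handoffs are manual, and those are exactly the points an agent would need replaced with structured data before it could take over.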

Week three: Inventory your API access. Check every buying platform contract for API provisions. Log in to developer portals and verify your credentials work. If you have never made an API call, this is when you learn what that means, or identify the person on your team who will.

Week four: Start the ownership conversation. Draft a one-page brief for your leadership that answers three questions: What is agentic buying? What do we need to prepare? Who owns it if we proceed? You are not asking for budget yet. You are establishing that this is a real operational shift that requires planning, not a vendor demo that can be evaluated in a single meeting.

This is also the week to register for the IAB Tech Lab Agent Registry, which is free and open to non-members. Getting your organization registered early gives you visibility into the ecosystem and signals to partners that you are taking the standard seriously.

The conversation you need to have with your leadership on Monday is not "should we buy an AI?" It is: "The industry just published a specification for how AI agents buy media. The first implementations are in open beta. Our competitors will start testing this year. Here is what our data and team need to look like before we can participate, and here is the six-month plan to get there."

That is a conversation about infrastructure, staffing, and timeline. Not hype. Not a demo. And it is the conversation that separates teams who adopt agentic buying smoothly from teams who spend 18 months discovering their data was not ready.

Dana Whitfield covers enterprise AI enablement for The Daily Vibe.